Below is a link to my entry on RateMyProfessors.com.

I'd just like to make a couple of suggestions first:

- When you rate one instructor, rate all of your instructors at the same time. Most of the motivation for people to do ratings comes from either reverence or loathing, so the ratings tend to be extreme. If you rate the middle-of-the-road ones as well, it helps make things more balanced.

- Include specific comments, either good or bad, to support your ratings. There are many different reasons why you might consider someone a good or a bad teacher, and knowing the particulars helps other people understand. It also helps the instructor improve and/or keep doing the good things.

Since I'm a lab instructor, it probably makes sense that my comments are about making the data that ratings represent more useful. I think ratings like this can be valuable, especially if the people doing them make a little effort to make their input meaningful.

(Oh, and one more point: if your spelling and grammar are good, your comments carry more weight. If you're trying to question someone else's competence and you can't produce a correct sentence yourself, your credibility isn't great.)

Here's the link to my rating.

Here's another site, but it doesn't get nearly as much traffic. (I'm not even on there as of June 2009.)

Here's the link to WLU on ProfessorPerformance.com.

If there are any more you think I should know about, let me know.

Here's a fascinating link (from RateMyProfessors) about looks and ratings.

Here's a link on how profs can chemically improve their ratings.

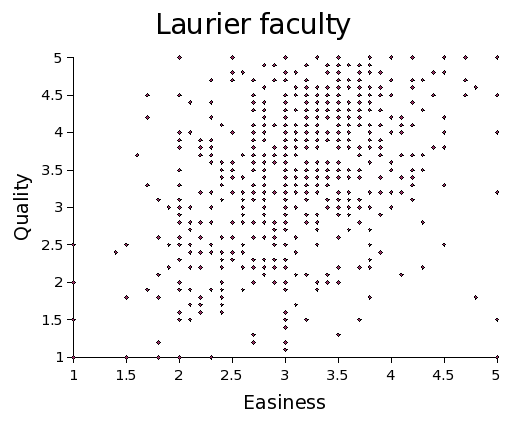

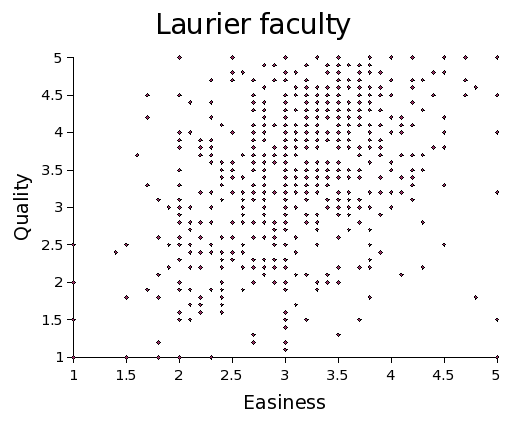

Here's a bit of research I did: I took the ratings for all instructors listed for Laurier (on December 5, 2005) and did a least-squares fit of quality as a function of easiness. I found:

- slope = 0.58 +/- 0.05

- y-intercept = 1.71 +/- 0.15
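For readers who want to reproduce this kind of fit, here is a minimal sketch of an ordinary least-squares line fit with standard errors, using only the Python standard library. The function name `fit_line` and the toy numbers are hypothetical illustrations, not the December 2005 Laurier data, and computing the errors from the residual variance with n - 2 degrees of freedom is the textbook convention, assumed here rather than confirmed as the method behind the numbers above.

```python
import math

def fit_line(xs, ys):
    """Hypothetical helper: fit y = slope*x + intercept by ordinary least squares.

    Returns (slope, intercept, se_slope, se_intercept).
    """
    n = len(xs)
    if n < 3:
        raise ValueError("need at least 3 points to estimate standard errors")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    # Residual variance, assuming n - 2 degrees of freedom (two fitted parameters)
    rss = sum((y - (slope * x + intercept)) ** 2 for x, y in zip(xs, ys))
    s2 = rss / (n - 2)
    se_slope = math.sqrt(s2 / sxx)
    se_intercept = math.sqrt(s2 * (1 / n + mean_x ** 2 / sxx))
    return slope, intercept, se_slope, se_intercept

# Made-up toy ratings, NOT the real Laurier data
easiness = [1.5, 2.0, 2.5, 3.0, 3.5, 4.0, 4.5]
quality = [2.5, 2.9, 3.0, 3.6, 3.7, 4.0, 4.4]
m, b, se_m, se_b = fit_line(easiness, quality)
print(f"slope = {m:.2f} +/- {se_m:.2f}")
print(f"y-intercept = {b:.2f} +/- {se_b:.2f}")
```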

Thus, if you take a person's score for easiness, their predicted quality would be q = 0.58*(easiness) + 1.71.
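As a quick illustration of that formula, this small Python sketch tabulates the predicted quality for each whole-number easiness score; the function name `predicted_quality` is just a label chosen here, and the coefficients are the fitted values quoted above.

```python
SLOPE = 0.58      # fitted slope from the Laurier data above
INTERCEPT = 1.71  # fitted y-intercept from the Laurier data above

def predicted_quality(easiness):
    """Quality predicted by the straight-line fit for a given easiness score."""
    return SLOPE * easiness + INTERCEPT

for e in range(1, 6):
    print(f"easiness {e}: predicted quality {predicted_quality(e):.2f}")
# easiness 1: predicted quality 2.29
# easiness 2: predicted quality 2.87
# easiness 3: predicted quality 3.45
# easiness 4: predicted quality 4.03
# easiness 5: predicted quality 4.61
```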

The graph looks like this:

Anyone whose rated quality is higher than that must be doing something well. (Incidentally, my rated quality is below it, for what it's worth.)
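One way to make "higher than that" concrete is to look at the residual, rated quality minus predicted quality: a positive residual puts someone above the fitted line. The helper names `quality_residual` and `above_fit` are hypothetical, and the example instructor scores are made up.

```python
def quality_residual(easiness, quality, slope=0.58, intercept=1.71):
    """Rated quality minus the quality the fit predicts from easiness.

    Positive means the instructor is rated above the fitted line.
    """
    return quality - (slope * easiness + intercept)

def above_fit(easiness, quality):
    """True if the rated quality beats the fit's prediction."""
    return quality_residual(easiness, quality) > 0

# Made-up example: easiness 3.0 predicts 3.45, so a 4.0 is above the line
print(round(quality_residual(3.0, 4.0), 2), above_fit(3.0, 4.0))
```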

You could be a bit more discriminating than that; if you include the standard errors, you can make three categories:

- low: rated quality below q = 0.53*(easiness) + 1.56, which is the lower bound including the errors

- high: rated quality above q = 0.63*(easiness) + 1.86, which is the upper bound including the errors

- medium: rated quality between those two lines
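The three categories can be sketched as a small classifier. Like the text, it shifts slope and intercept together by their standard errors to get the two bounding lines; that is the simple convention used here, not a formal confidence band (which would also account for the correlation between slope and intercept). The name `fitted_category` is hypothetical.

```python
SLOPE, SLOPE_ERR = 0.58, 0.05
INTERCEPT, INTERCEPT_ERR = 1.71, 0.15

def fitted_category(easiness, quality):
    """Classify a rating as low/medium/high relative to the error-shifted fit lines."""
    lower = (SLOPE - SLOPE_ERR) * easiness + (INTERCEPT - INTERCEPT_ERR)  # 0.53*e + 1.56
    upper = (SLOPE + SLOPE_ERR) * easiness + (INTERCEPT + INTERCEPT_ERR)  # 0.63*e + 1.86
    if quality < lower:
        return "low"
    if quality > upper:
        return "high"
    return "medium"

print(fitted_category(3, 2.0), fitted_category(5, 4.5), fitted_category(1, 2.6))
# low medium high
```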

This differs somewhat from the categories used by the website, which are:

- low: rated quality below 2.5

- high: rated quality above 3.5

- medium: rated quality between 2.5 and 3.5
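To see where the two schemes disagree, this sketch classifies the same ratings both ways. `site_category` encodes the website's fixed thresholds as listed above, `fitted_category` uses the two bounding lines from the fit; both function names are hypothetical.

```python
def site_category(quality):
    """The website's fixed bands: below 2.5 low, above 3.5 high."""
    if quality < 2.5:
        return "low"
    if quality > 3.5:
        return "high"
    return "medium"

def fitted_category(easiness, quality):
    """Bands relative to the error-shifted least-squares lines."""
    lower = 0.53 * easiness + 1.56
    upper = 0.63 * easiness + 1.86
    if quality < lower:
        return "low"
    if quality > upper:
        return "high"
    return "medium"

for easiness, quality in [(1, 2.6), (5, 4.5)]:
    print(easiness, quality, site_category(quality), fitted_category(easiness, quality))
# 1 2.6 medium high
# 5 4.5 high medium
```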

For instance, if you get rated 1 for easiness (i.e., fire-breathing), a rated quality above 2.49 means you're doing well.

On the other hand, if you get rated 5 for easiness (i.e., you give marks away like Santa Claus), then a rated quality of 4.5 is really still mediocre.
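Both examples come from plugging the easiness score into the two bounding lines. Assuming ratings run from 1 to 5, the upper bound at easiness 5 (5.01) is out of reach, so nobody rated 5 for easiness can land in the "high" category, and a 4.5 sits between the bounds:

```latex
\begin{aligned}
q_{\text{upper}}(1) &= 0.63 \times 1 + 1.86 = 2.49 \\
q_{\text{lower}}(5) &= 0.53 \times 5 + 1.56 = 4.21 \\
q_{\text{upper}}(5) &= 0.63 \times 5 + 1.86 = 5.01
\end{aligned}
```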

The philosophical question this raises is whether being easy gets a higher quality rating, or whether being of good quality gets a higher easiness rating. (Can you even distinguish those???)